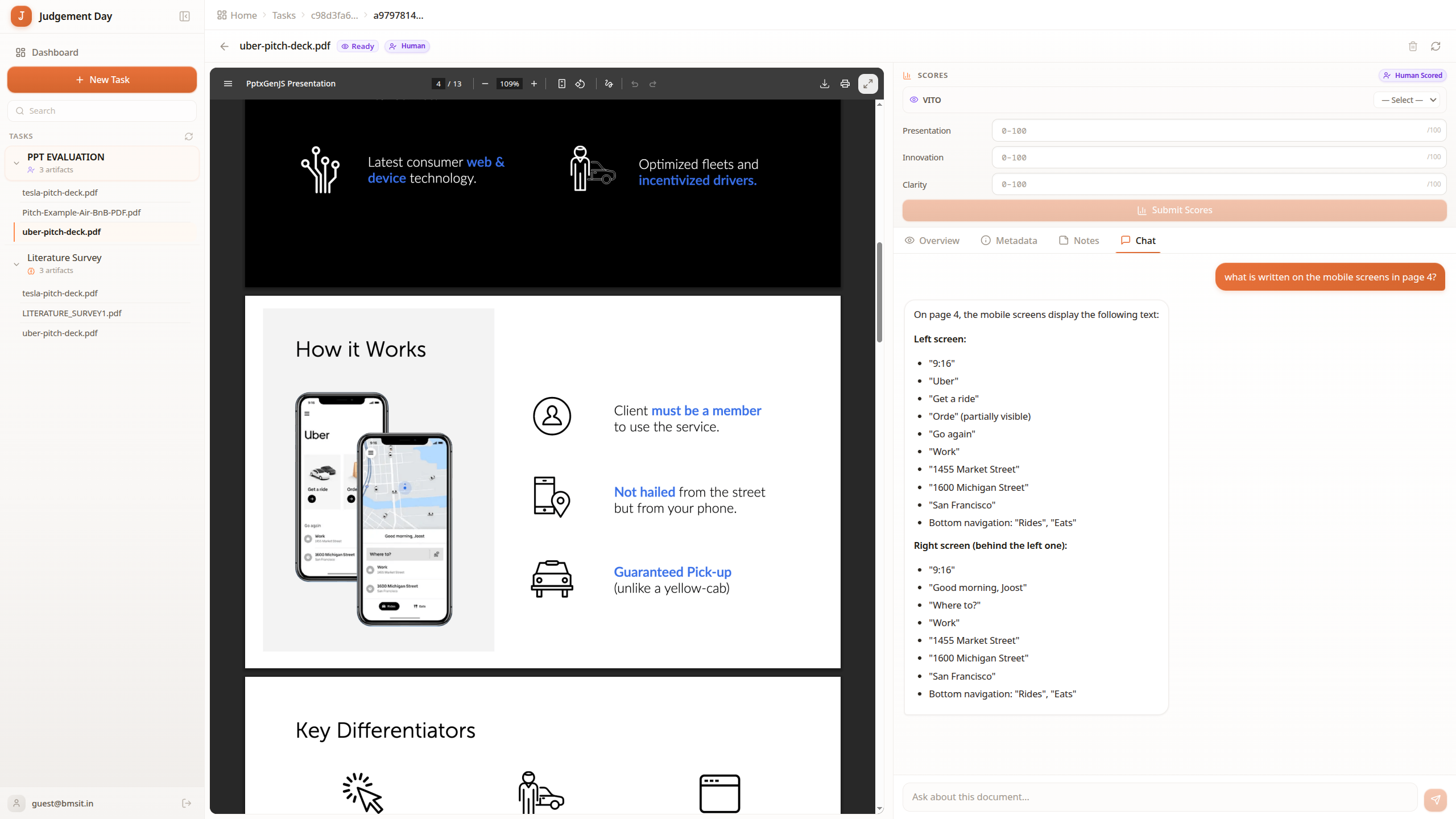

Evidor - AI-Powered Document Evaluation Platform

Evidor started as Judgement Day, a single-Lambda document auditor that hit timeouts at scale. It evolved by splitting into domain-specific Lambdas (auth, projects, tasks, artifacts, rubrics, eval), adding a dedicated eval engine running in Docker on EC2, and building two evaluation modes: Lens (direct 1-10 scoring) and Swiss (pairwise tournament ranking). Each use case gets its own subdomain -- hire.evidor.tech, pitch.evidor.tech, assess.evidor.tech -- with tailored vocabulary but shared infrastructure.
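As a rough illustration of how one codebase could pick its vocabulary from the subdomain, here is a minimal sketch. The three subdomains come from the text above; the `Platform` type, the vocabulary strings, and the fallback to `assess` for unknown hosts are assumptions made for the example, not Evidor's actual code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Platform:
    key: str
    artifact_noun: str  # hypothetical per-platform vocabulary


# Subdomain keys are from the text; the nouns are illustrative guesses.
PLATFORMS = {
    "hire": Platform("hire", "resume"),
    "pitch": Platform("pitch", "pitch deck"),
    "assess": Platform("assess", "submission"),
}
DEFAULT_PLATFORM = PLATFORMS["assess"]  # assumed fallback for unknown hosts


def detect_platform(hostname: str) -> Platform:
    """Map a request hostname to a platform, ignoring case and any port."""
    host = hostname.lower().split(":")[0]
    label = host.split(".")[0]
    if host.endswith(".evidor.tech") and label in PLATFORMS:
        return PLATFORMS[label]
    return DEFAULT_PLATFORM


print(detect_platform("hire.evidor.tech").artifact_noun)
```

Keeping the mapping in one table means a new subdomain only needs a new entry, while the shared infrastructure stays untouched.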

Swiss-system tournament pairing with Buchholz and Sonneborn-Berger tiebreaks, plus Bradley-Terry strength estimation, for fair pairwise ranking. Static Next.js exports on CloudFront with catch-all routing and CloudFront Functions for SPA rewriting. Multi-subdomain architecture from a single S3 bucket with platform detection via hostname.
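For readers unfamiliar with Bradley-Terry, the sketch below fits strengths from a list of pairwise results using the standard minorization-maximization update. It illustrates the model only: the function name, the input shape, and the fixed iteration count are assumptions, draws are not handled, and nothing here is taken from Evidor's engine.

```python
from collections import defaultdict


def bradley_terry(results, iterations=200):
    """Fit Bradley-Terry strengths from (winner, loser) pairs.

    Returns a dict of strengths that sum to 1. Draws are not modeled.
    """
    players = sorted({p for match in results for p in match})
    if not players:
        return {}
    wins = defaultdict(int)                          # total wins per player
    opponents = defaultdict(lambda: defaultdict(int))  # games played per pair
    for winner, loser in results:
        wins[winner] += 1
        opponents[winner][loser] += 1
        opponents[loser][winner] += 1

    strength = {p: 1.0 / len(players) for p in players}
    for _ in range(iterations):
        updated = {}
        for i in players:
            # MM update: wins_i / sum_j n_ij / (p_i + p_j) over actual opponents.
            denom = sum(n / (strength[i] + strength[j])
                        for j, n in opponents[i].items())
            updated[i] = wins[i] / denom
        total = sum(updated.values())
        strength = {p: s / total for p, s in updated.items()}
    return strength


matches = [("A", "B")] * 3 + [("B", "A")] + [("B", "C")] * 2 + [("C", "B")]
print(bradley_terry(matches))
```

In this example A beats B three times out of four and B beats C twice out of three, so the fitted strengths settle at a 6:2:1 ratio.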

Built a multi-tenant evaluation platform serving three distinct use cases (hiring, pitch evaluation, assessments) from a single codebase across dedicated subdomains. Custom rubrics define scoring criteria, artifacts are evaluated via multimodal AI (Gemini processes raw document bytes), and results are ranked using Swiss-system tournaments with Buchholz, Sonneborn-Berger, and Bradley-Terry tiebreakers. The Swiss format and the first two tiebreaks come from competitive chess; Bradley-Terry is a statistical model for pairwise comparisons.
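To make the chess tiebreaks concrete, the following sketch computes Buchholz (the sum of each opponent's points) and Sonneborn-Berger (opponents' points weighted by the result against them) from a list of match results. The function names and the `(a, b, score_a)` input shape are assumptions for illustration; how Evidor orders or combines its tiebreaks is not stated in the text.

```python
def tiebreaks(results):
    """results: list of (a, b, score_a), with score_a in {1.0, 0.5, 0.0}.

    Returns {player: (points, buchholz, sonneborn_berger)}.
    """
    points, games = {}, {}
    for a, b, score_a in results:
        points[a] = points.get(a, 0.0) + score_a
        points[b] = points.get(b, 0.0) + (1.0 - score_a)
        games.setdefault(a, []).append((b, score_a))
        games.setdefault(b, []).append((a, 1.0 - score_a))

    table = {}
    for player, played in games.items():
        # Buchholz: total points scored by everyone this player faced.
        buchholz = sum(points[opp] for opp, _ in played)
        # Sonneborn-Berger: full credit for beaten opponents, half for draws.
        sonneborn_berger = sum(points[opp] * s for opp, s in played)
        table[player] = (points[player], buchholz, sonneborn_berger)
    return table


def rank(results):
    """Order players by points, then Buchholz, then Sonneborn-Berger."""
    table = tiebreaks(results)
    return sorted(table, key=lambda p: table[p], reverse=True)


# Round 1: A beats B, C beats D. Round 2: A beats C, D beats B.
rounds = [("A", "B", 1.0), ("C", "D", 1.0), ("A", "C", 1.0), ("D", "B", 1.0)]
print(rank(rounds))
```

Here C and D both finish on one point, but C faced stronger opposition (Buchholz 3 versus 1), so C ranks above D.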

Evidor - document evaluation dashboard

§1. The Domain & The Problem

Evaluating large document sets (100+ resumes, 50+ pitch decks) requires structured comparison, not just individual scoring. Manual review doesn't scale, and simple AI scoring lacks consistency across documents. Organizations need both absolute scoring and relative ranking.

§2. The Architecture

The system runs seven domain-specific Lambda functions behind API Gateway and an async eval engine on EC2 that polls DynamoDB for queued jobs. S3 stores artifacts via presigned uploads, CloudFront serves a static Next.js export across three subdomains, and CloudFront Functions handle SPA routing and RSC data rewrites.
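The rewrite step can be pictured as a pure function from request URI to S3 object key. CloudFront Functions themselves are written in JavaScript; the logic is sketched in Python here for readability. The export layout (one `index.html` per route, with an `index.txt` RSC payload beside it), the `/projects/` prefix, and the `/projects/_/` shell directory are all assumptions for the example rather than Evidor's real configuration.

```python
import posixpath

# Hypothetical: dynamic routes under these prefixes share one exported shell page.
SHELL_ROUTES = {"/projects/": "/projects/_/"}


def rewrite_uri(uri: str) -> str:
    """Map a viewer request URI to the object a static export would contain."""
    _, ext = posixpath.splitext(uri)

    # Dynamic routes: serve the shared shell page, or its RSC payload for .txt.
    for prefix, shell_dir in SHELL_ROUTES.items():
        if uri.startswith(prefix) and uri != prefix and ext in ("", ".txt"):
            return shell_dir + ("index.txt" if ext == ".txt" else "index.html")

    # Real files (JS, CSS, images, exported payloads) pass through unchanged.
    if ext:
        return uri

    # Extensionless page routes resolve to their directory index.
    return uri.rstrip("/") + "/index.html"


for path in ("/", "/about", "/projects/abc123", "/projects/abc123.txt"):
    print(path, "->", rewrite_uri(path))
```

Because the function never touches the hostname, the same rewrite works for all three subdomains served from the one bucket.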

§3. The Evaluation Engine

Two modes: Lens (direct rubric-based scoring where Gemini evaluates each artifact against weighted criteria) and Swiss (pairwise tournament where artifacts are matched head-to-head across rounds, scored comparatively, then ranked using chess tournament tiebreakers). The engine processes raw PDF/PPTX bytes via Gemini's multimodal API -- no text extraction middleware.
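The two modes can be sketched without the model call itself. Below, `lens_score` combines per-criterion scores into a weighted mean, and `swiss_pairings` builds one round by pairing artifacts with similar running scores while avoiding rematches. Both functions, their names, and the greedy pairing strategy are simplifying assumptions; a production Swiss pairer typically uses backtracking or graph matching, and the text does not say which approach Evidor takes.

```python
def lens_score(criterion_scores, weights):
    """Weighted mean of per-criterion scores (each assumed to be on a 1-10 scale)."""
    total_weight = sum(weights.values())
    return sum(criterion_scores[c] * w for c, w in weights.items()) / total_weight


def swiss_pairings(points, played):
    """Greedy pairing for one Swiss round.

    points: {artifact_id: running score}
    played: set of frozenset({a, b}) pairs that have already met
    Returns (pairs, bye), where bye is the unpaired artifact or None.
    """
    # Highest score first; ties broken by id so the result is deterministic.
    unpaired = sorted(points, key=lambda a: (-points[a], a))
    pairs = []
    while len(unpaired) > 1:
        first = unpaired.pop(0)
        # Nearest-ranked opponent not yet faced; fall back to a rematch if none.
        idx = next((i for i, opp in enumerate(unpaired)
                    if frozenset((first, opp)) not in played), 0)
        pairs.append((first, unpaired.pop(idx)))
    bye = unpaired[0] if unpaired else None
    return pairs, bye


print(lens_score({"clarity": 8, "impact": 6}, {"clarity": 1, "impact": 3}))

standings = {"a": 2.0, "b": 1.0, "c": 1.0, "d": 0.0}
met = {frozenset(p) for p in [("a", "b"), ("c", "d"), ("a", "c"), ("b", "d")]}
print(swiss_pairings(standings, met))
```

In the pairing example, `a` has already met `b` and `c`, so it is matched with `d`, leaving `b` and `c` to play each other.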